DSharp Studio – Model it.

That’s it.

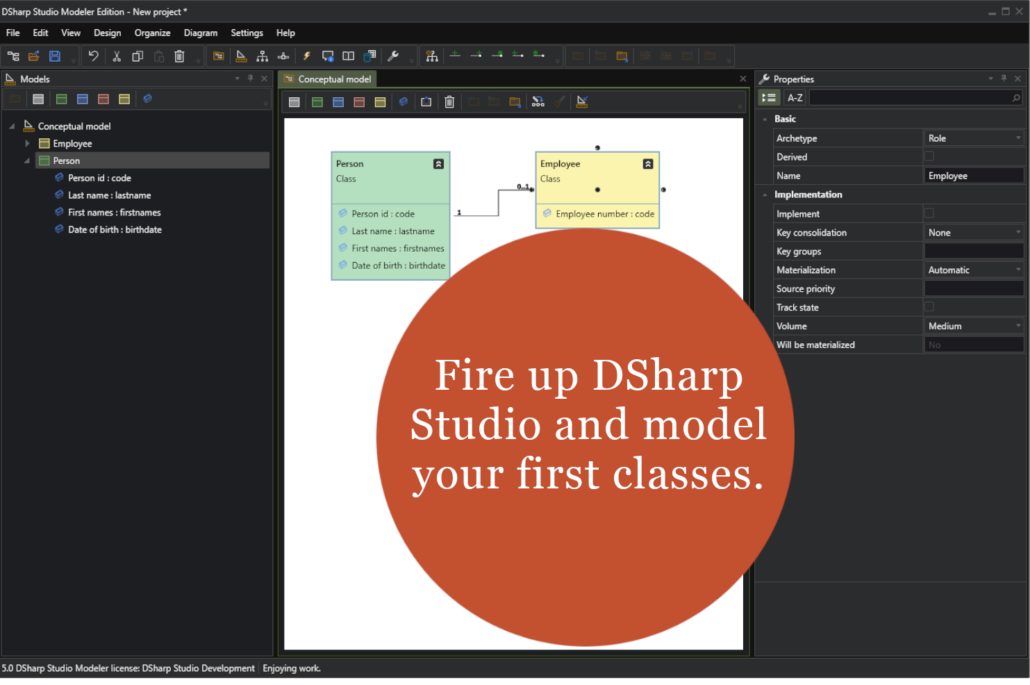

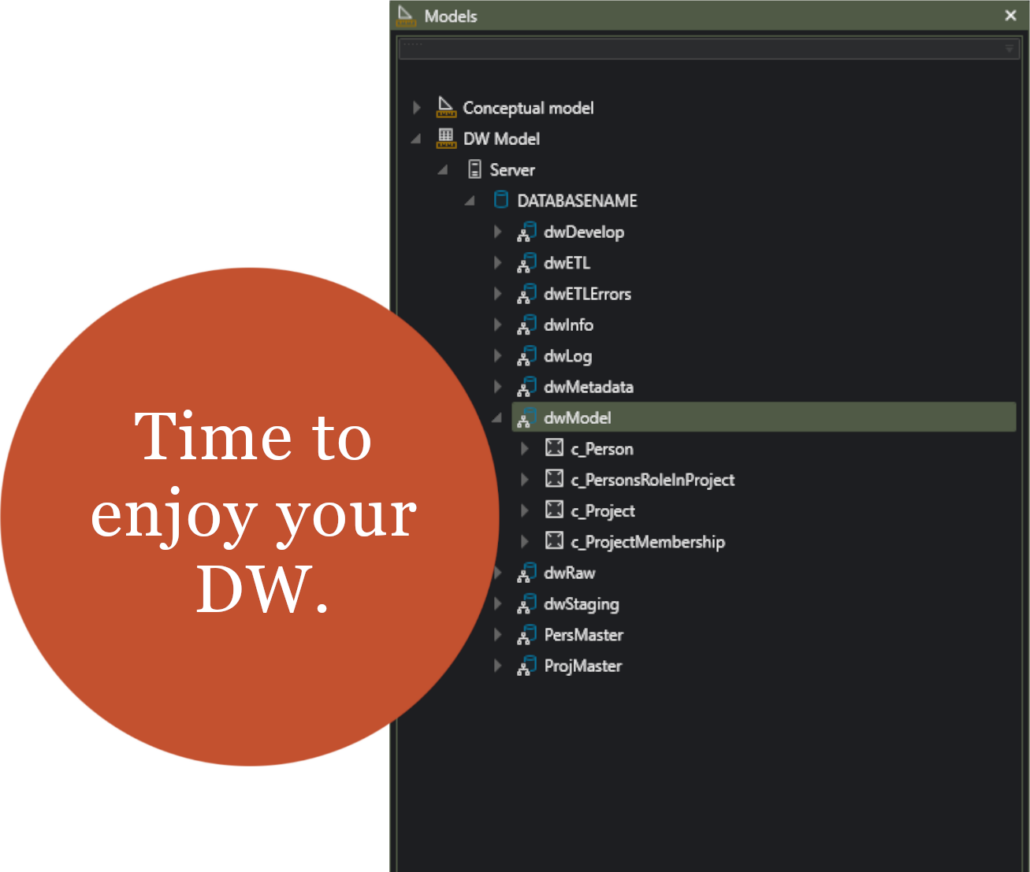

You start with modeling business needs, i.e., you create a Conceptual Model.

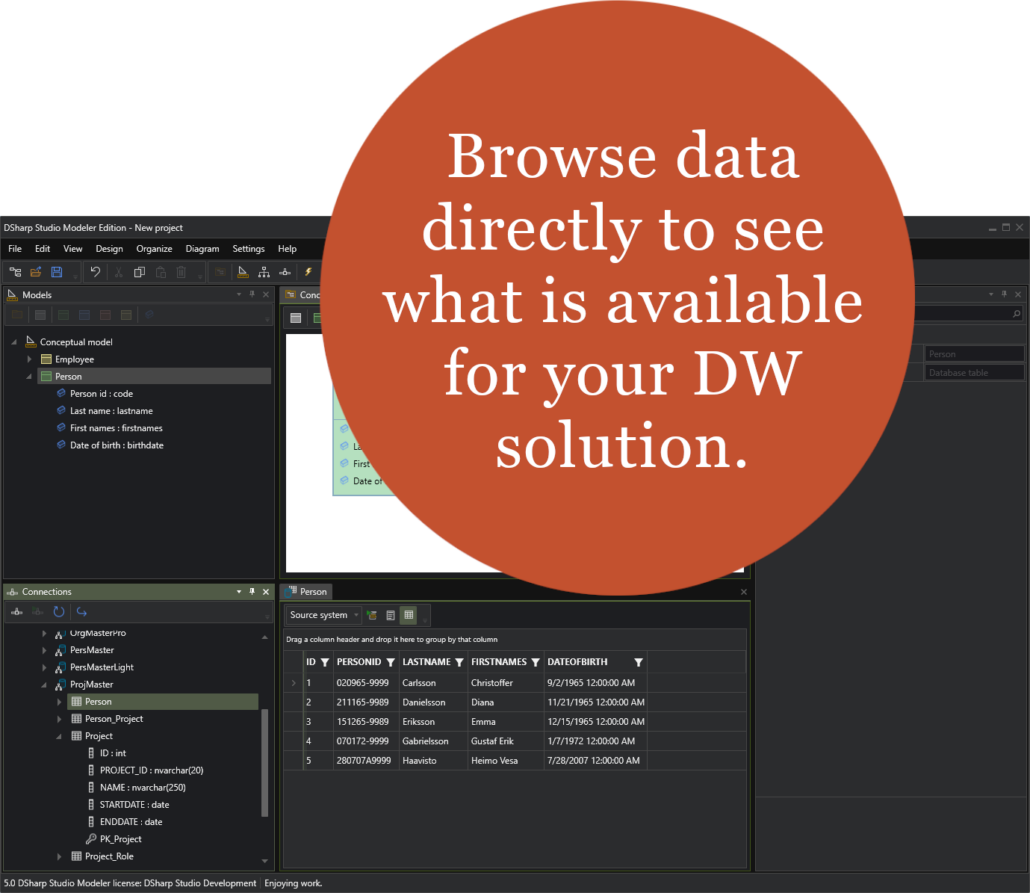

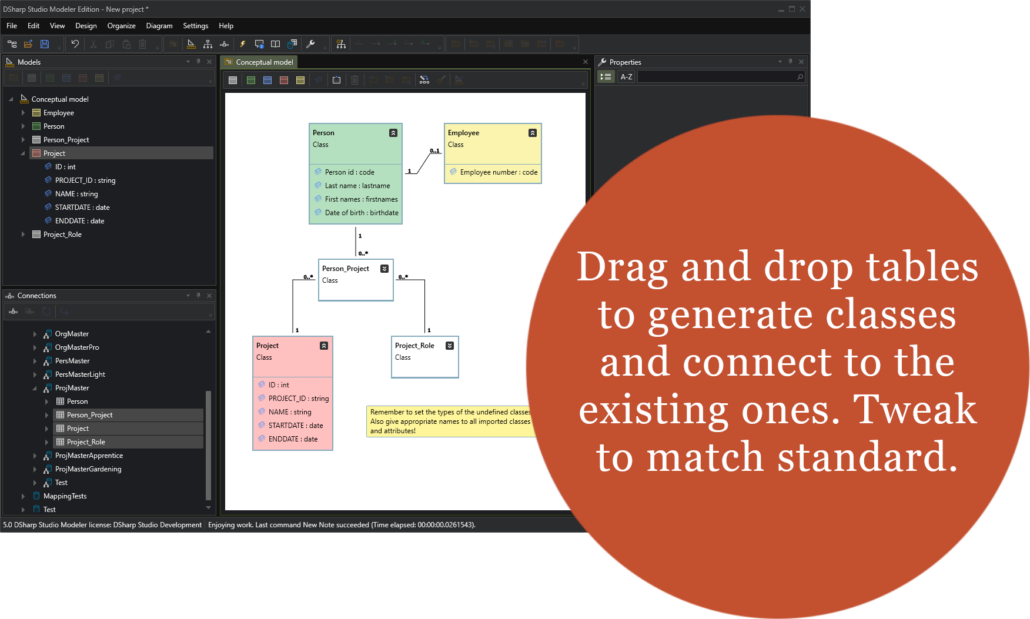

Then you map the data to the created Conceptual Model.

The rest is automatically created without scripting, further mapping, templates, or maintenance.

👉 Take a look below at how easy it is to create a Data Warehouse.

Studio modules

DSharp Studio Modeler is a powerful, Windows-based modeling tool for creating and managing complex real-world models.

DSharp Viewer is a free application for viewing models. It comes with comprehensive documentation and support.

A DSharp Studio add-on that provides a metadata database, automatic updates to the data catalog, and an interface for external metadata.

DSharp Studio is an innovative no-code automation solution for building Data Warehouses.

DSharp Studio is designed to minimize manual development and maintenance work by leveraging the expressiveness of Conceptual models. Specify the information you need in your solution by creating a fit-for-purpose Conceptual model, connect it to source data, tweak some details, and you’re done. DSharp Studio converts this less detailed Conceptual model into a more detailed physical database solution that can store, process, and publish data fully compliant with your original model.
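To make the idea of converting a Conceptual model into a physical one more concrete, here is a minimal Python sketch. It is not DSharp Studio's conversion mechanism or its output: the `Attribute` and `ConceptClass` types, the `to_ddl` function, the naming rules, and the example classes are all hypothetical and chosen only for illustration. It shows how a small amount of model metadata can be expanded mechanically into more detailed SQL.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class Attribute:
    """Hypothetical attribute of a conceptual class."""
    name: str
    sql_type: str = "VARCHAR(100)"


@dataclass
class ConceptClass:
    """Hypothetical conceptual class with many-to-one associations."""
    name: str
    attributes: List[Attribute] = field(default_factory=list)
    references: List[str] = field(default_factory=list)  # names of referenced classes


def to_ddl(cls: ConceptClass) -> str:
    """Render one conceptual class as a CREATE TABLE statement (illustrative rules only)."""
    table = cls.name.lower()
    # Assumption: every class gets a surrogate key named <table>_id.
    columns = [f"{table}_id INTEGER PRIMARY KEY"]
    columns += [f"{a.name.lower()} {a.sql_type}" for a in cls.attributes]
    # Assumption: each association becomes a foreign-key column.
    columns += [
        f"{ref.lower()}_id INTEGER REFERENCES {ref.lower()} ({ref.lower()}_id)"
        for ref in cls.references
    ]
    body = ",\n    ".join(columns)
    return f"CREATE TABLE {table} (\n    {body}\n);"


customer = ConceptClass("Customer", [Attribute("Name")])
invoice = ConceptClass(
    "Invoice", [Attribute("InvoiceDate", "DATE")], references=["Customer"]
)

for model_class in (customer, invoice):
    print(to_ddl(model_class))
```

The model stays small (two classes, two attributes, one association), while the generated SQL carries the extra physical detail such as keys and foreign-key columns.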

DSharp Studio includes the full modeling capabilities of DSharp Modeler, which are also available separately. In addition, it includes a powerful analysis feature that almost does the work for you and, most importantly, an almost magical conversion mechanism that definitely does the work for you.

Key features

- Faster Development: Using metadata-driven automation, DSharp Studio significantly speeds up Data Platform development by generating SQL code automatically from the Conceptual models, eliminating manual coding and the errors that come with it.

- Broad Compatibility: Our solution supports multiple target platforms such as Microsoft SQL Server, Azure SQL Database, Fabric and PostgreSQL, providing the flexibility to work with your preferred Data Platform technology.

- Scalability and Flexibility: The solution generated by DSharp Studio is capable of handling growing data volumes and adapting to changing business requirements, ensuring that your Data Platform remains agile and future-proof.

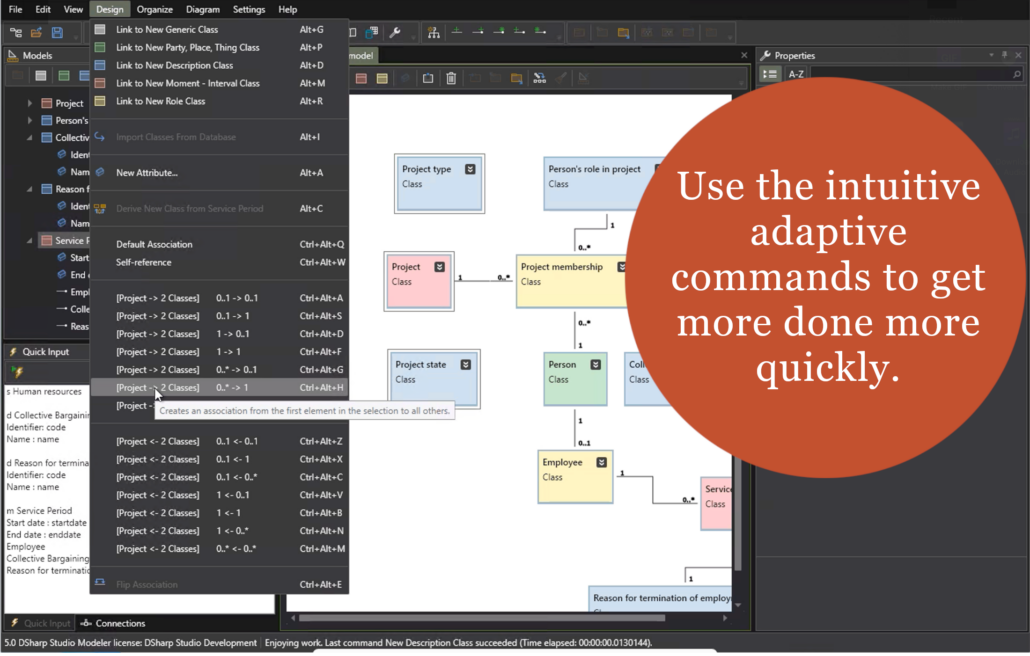

- User Interface: DSharp Studio offers a straightforward user interface with dedicated UI components for structuring the model, editing model element properties, and building the actual model content. The menu and toolbar commands are designed to deliver with one click what takes multiple user actions in similar modeling tools.

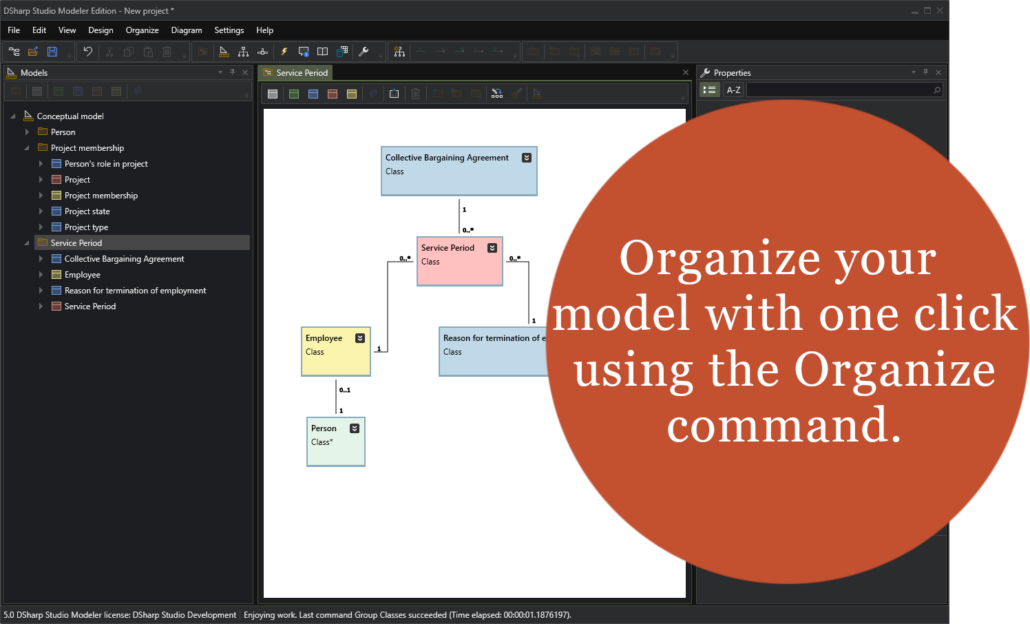

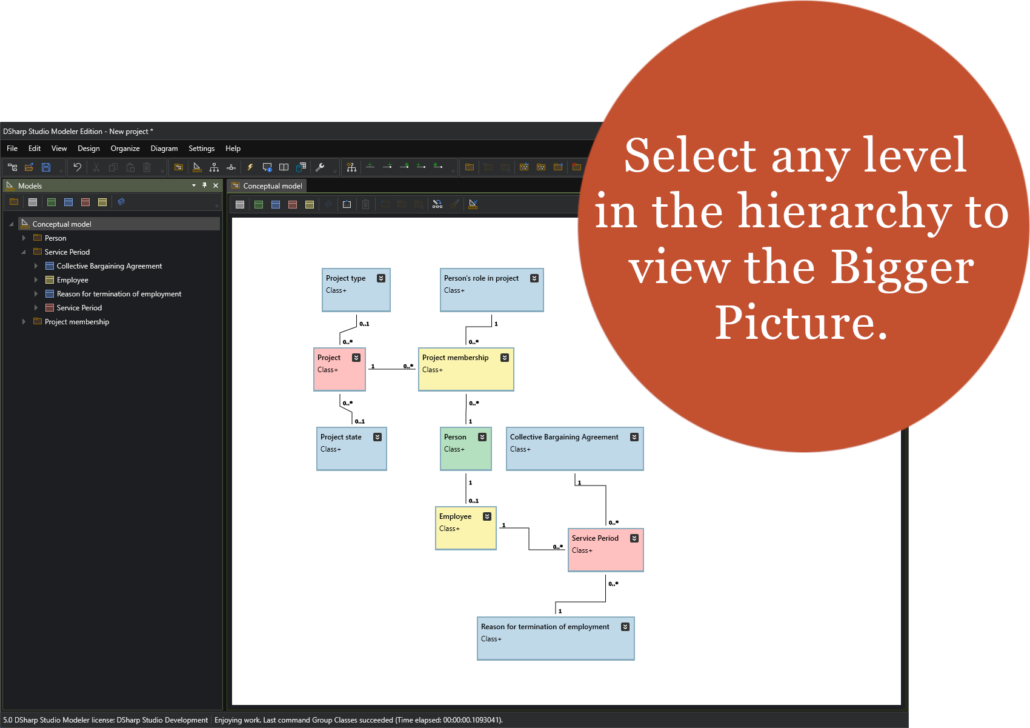

- Model and Submodel Management: DSharp Studio lets you structure your classes using submodels. This hierarchical structure allows for detailed and focused partitioning and analysis of different subsystems or domains within a larger model, enhancing clarity and comprehensibility. DSharp Studio actively encourages this divide-and-conquer approach by providing functions that automatically group related classes into their own submodels.

- Model Editor: The Model Editor is a central feature where the actual modeling work takes place. Users can create classes, attributes and associations directly within this editor, leveraging functionalities like intelligent element creation and automatic cleanup features to streamline the modeling process.

- Importing Models: DSharp Studio can import models created with other tools, so it integrates with existing workflows.

- Database Connections: The tool supports data connections, allowing users to browse data and to generate models directly from database schemas. This serves both the data-driven and the business-driven design approaches.

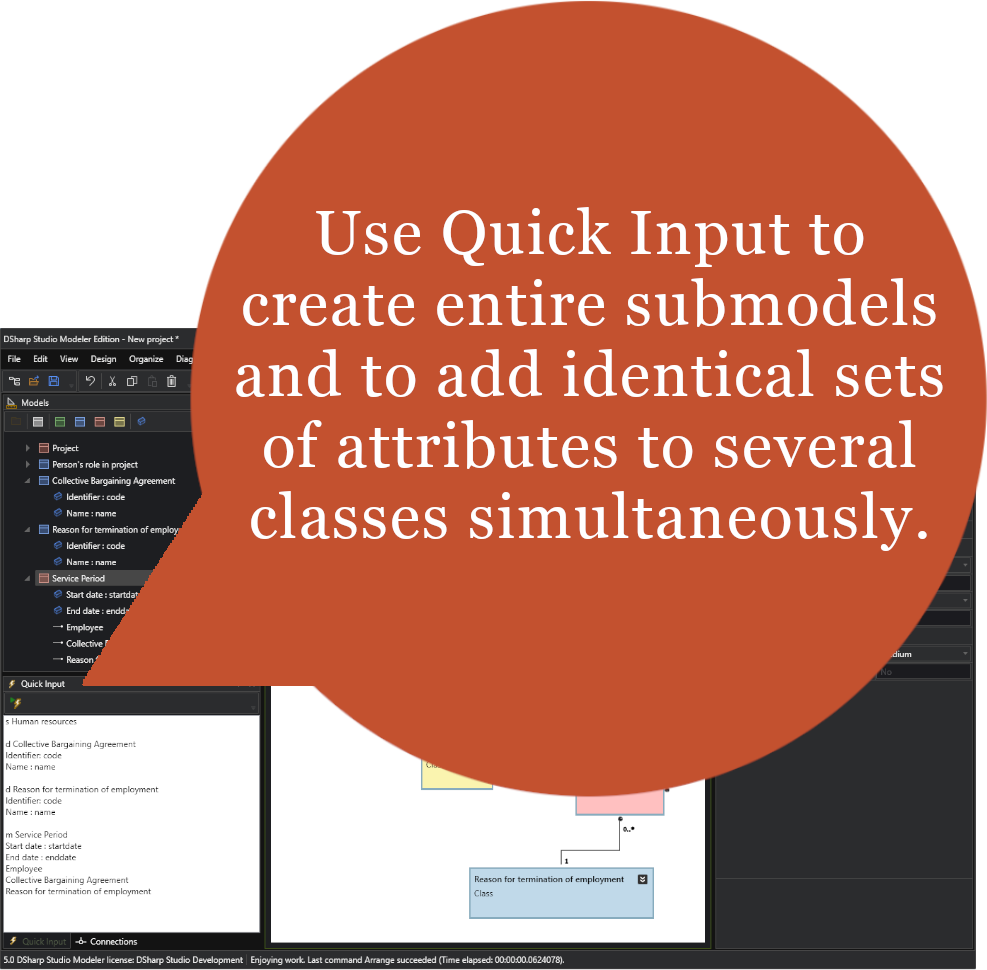

- Quick Input and Efficiency Tools: With features like Quick Input, users can rapidly define model elements using a simple syntax, significantly speeding up the modeling process. This, along with adaptive menu and toolbar commands doing more in less time, underscores DSharp Studio’s emphasis on efficiency and user productivity. A Power User is truly that.

- The Analysis Engine: The real-time class analysis works like a to-do list: it displays issues that need to be resolved before a class is ready for implementation, and it also provides one-click solution alternatives for each issue to be solved right there and then.

- DW Conversion: The user can select any subset of classes from their model for DW conversion. Running the model runs an additional set of analyses, making sure only valid classes enter the conversion process.

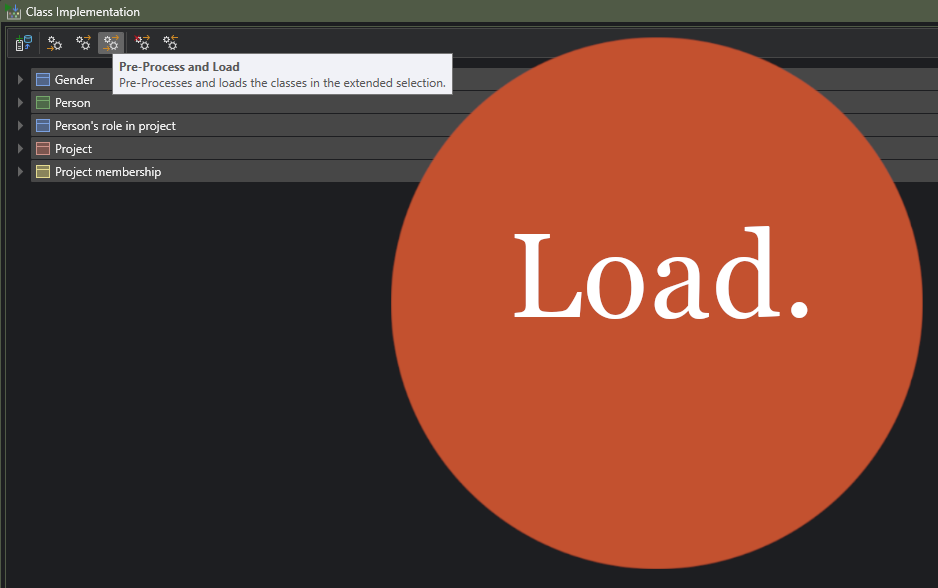

- Class-Based Actions: The user does not need to know the physical model to access it. All data- or structure-related tasks can be performed by simply selecting the classes of interest and then running the desired command from the menu or task bar. DSharp Studio knows the table structure behind the classes and builds the SQL code accordingly, in real time. Deploying new classes to the DW solution, loading the DW with new data, querying the data of a class, clearing and dropping tables, and analyzing data are all just one click away, starting from any class within the Conceptual model. For all practical purposes, the physical model has been abstracted out, and the user can perform most, if not all, tasks purely within the Conceptual model.

- Run Mode Diagrams: The user can visually explore the class or table structure using Ad Hoc diagrams, as well as explore the data lineage in Data Flow diagrams.

- Comprehensive Documentation and Support: We provide dedicated support and extensive documentation to ensure you have the resources needed to fully leverage the capabilities of DSharp Studio in conjunction with your chosen modeling tool.
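The Analysis Engine and DW Conversion items above describe a to-do list of issues per class and a gate that lets only valid classes into conversion. The following Python sketch pictures that idea under stated assumptions. The `ModelClass` type, the two rules in `analyze`, and the `ready_for_conversion` helper are hypothetical and do not reflect DSharp Studio's actual checks or API.

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class ModelClass:
    """Hypothetical model class used only for this illustration."""
    name: str
    attributes: List[str] = field(default_factory=list)
    source_table: Optional[str] = None  # mapped source data, if any


def analyze(cls: ModelClass) -> List[str]:
    """Return the open to-do items for one class (illustrative rules only)."""
    issues = []
    if not cls.attributes:
        issues.append("add at least one attribute")
    if cls.source_table is None:
        issues.append("map the class to source data")
    return issues


def ready_for_conversion(classes: List[ModelClass]) -> List[ModelClass]:
    """Keep only the classes whose to-do list is empty."""
    return [c for c in classes if not analyze(c)]


model = [
    ModelClass("Customer", ["Name"], source_table="crm.customers"),
    ModelClass("Product", ["Title"]),  # not yet mapped to a source
    ModelClass("Region"),              # no attributes, no mapping
]

for c in model:
    print(c.name, analyze(c))

print([c.name for c in ready_for_conversion(model)])
```

In this sketch only `Customer` passes the gate; the other two classes each show the items that would have to be resolved first.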

Discover the advantages of DSharp Studio in accelerating your Data Platform development and maintenance. Equip your organization with a modern, efficient, and flexible Data Platform Automation solution, unlocking valuable insights to drive your business forward.

See the detailed features here.