What We Learn

How to handle data sets that contain legitimate duplicates.

Mapping

Let’s continue with the HumMaster expansion. Let’s take a look at DS_DemoData.HumMaster.SALARY_PAYMENT_PART:

Every time an actual payment is sent out to the employee, these rows get recorded. Payments made on the same date to the same employee represent one payment/transaction. What’s noteworthy is that there are two identical rows, and both are valid (two bonuses of the same size), so it is not possible to define a natural key for this data. Hence, the Salary payment part class has neither a Business key nor a Primary key. To deal with this, we will set the Duplicate handling metadata parameter to generate unique hashes based on all the values on the row, plus a running index number within identical rows. The data is also transactional in nature, as the same payment never happens more than once.
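The idea of hashing all row values plus a running index can be illustrated with a minimal Python sketch. This is not the engine's actual implementation: the column layout, the `|` separator, the MD5 hash function, and the helper name `force_unique_hashes` are all assumptions made for illustration only.

```python
import hashlib
from collections import defaultdict


def force_unique_hashes(rows, sep="|"):
    """Return one hash per row; identical rows get distinct hashes
    because a running index within each group of identical rows
    is appended before hashing. (Hypothetical helper.)"""
    seen = defaultdict(int)  # occurrences of each identical row so far
    hashes = []
    for row in rows:
        # Concatenate all values on the row into one string.
        payload = sep.join("" if v is None else str(v) for v in row)
        seen[payload] += 1
        # Include the running index (1, 2, ...) in the hashed string.
        keyed = f"{payload}{sep}{seen[payload]}"
        hashes.append(hashlib.md5(keyed.encode("utf-8")).hexdigest())
    return hashes


# Hypothetical sample rows: (employee, payment date, component, amount).
rows = [
    ("E001", "2024-01-31", "SALARY", 3000),
    ("E001", "2024-01-31", "BONUS", 500),
    ("E001", "2024-01-31", "BONUS", 500),  # legitimate duplicate
]
hashes = force_unique_hashes(rows)
```

In this sketch the two identical bonus rows are hashed with the indexes 1 and 2, so they end up with different hashes, while re-running the function on the same input in the same order gives the same result.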

Mapping And Modeling

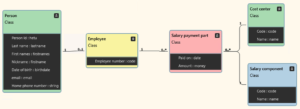

Model the remaining two classes like in the image above (re-use the Cost center class):

Set Implement to True for new classes. Additional model details:

| Do this… | …and this happens |
|---|---|
| Set Salary component.Code as Business key. | Same old, same old… |
| Set Salary payment part to be a Transaction. | Salary payment part will be implemented by a non-historized link. |
| Set Salary payment part’s Duplicate handling to Force uniqueness. | While hashing, all identical rows will get a running index which will be included in the hash. Hence all hashes will be unique. |

Export the model to your working directory and refresh D♯ Engine.

Having already implemented Employer, the rest of the mappings could go like this:

| Source table… | …goes to the Staging Area as… | …and maps to the Class |
|---|---|---|
| DS_DemoData.HumMaster.SALARY_PAYMENT_PART | HumMaster.RAW_SALARY_PAYMENT_PART | Salary payment part |
| DS_DemoData.HumMaster.SALARY_COMPONENT | HumMaster.RAW_SALARY_COMPONENT | Salary component |

Add the necessary mapping rows to the 05_Mappings_HumMaster.csv file and save it.

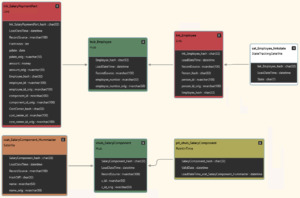

Refresh D♯ Engine. Select the HumMaster folder and press the Table Structure button. Your Raw Vault should look like this:

If so, deploy.

Run Tutorial Scripts

Run the following tutorial script commands from the Help -> Tutorials -> Intro Course -> Hash Duplicate Handling menu, and inspect the results.

| Script | Source data | Main points of interest |
|---|---|---|
| Step 1: Load Salary Data | There are real duplicate rows. | Among identical rows, the hashing procedure has generated a running index number to be included in the hash, making the hashes unique. |
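When inspecting the results, it can help to confirm which source rows really are duplicated. The following is a small Python sketch of such a check on rows exported from the source table; the column layout, the sample values, and the helper name `duplicate_groups` are hypothetical.

```python
from collections import Counter


def duplicate_groups(rows):
    """Return each fully identical row that occurs more than once,
    together with its number of occurrences. (Hypothetical helper.)"""
    counts = Counter(rows)
    return {row: n for row, n in counts.items() if n > 1}


# Hypothetical sample rows: (employee, payment date, component, amount).
source_rows = [
    ("E001", "2024-01-31", "SALARY", 3000),
    ("E001", "2024-01-31", "BONUS", 500),
    ("E001", "2024-01-31", "BONUS", 500),
]
duplicates = duplicate_groups(source_rows)
```

Each group found this way should correspond to as many distinct hashes in the loaded data as it has rows.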