What We Learn

Handling hash errors so that they do not crash the target table load process.

If the hashing process produces non-unique hash values, the next step (load into hub, load into satellite, load into link) will fail due to an attempt to insert duplicate primary key values into the target table. Letting the load crash may be a valid way to deal with the bad data; the crash and the reason for it will be registered in the log, and can then be dealt with. The data in the target table has not been updated at all, leaving it in the same state it was in before the failed load attempt, so it contains no incomplete partially loaded batches. This portion of the load will not succeed until the error has been corrected in the source system.

If you want to enable loads that loads valid rows but skips invalid ones, you can choose to do that by using D# Engine’s automatic duplicate hash handling mechanism. This is useful particularly during development, when you want to load at least some data in order to be able to get the Info Mart development going. In a production environment you should have an agreed-upon process in place for handling bad data.

How It Works

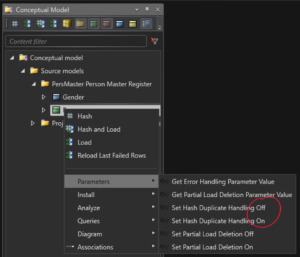

To enable this functionality, in D# Engine select the class (or classes) you want enabled for hash tests and run the command Parameters.Set Hash Duplicate Handling On found in the popup-menu for the selected classes. This sets the appropriate parameter value in the ETLParameters table to 1.

Don’t do this yet, wait until you have completed Step 1 of the tutorial scripts below.

The error handling procedure will check the value of this parameter, and move all rows containing duplicate hashes from the working table to the error handling table. Once all offending rows have been removed from the working table, the next steps (load hub, load satellite, load link) in the loading process will proceed without errors.

The rows in the error table can be checked and reported back to the customer. Additionally, they may also be processed so that the rows containing obviously wrong data can be dropped from the error table, and the remaining correct (and verified to be so!) rows can then be moved back to the working table and loaded successfully into the raw vault.

The same duplicate hashes will be generated in each load batch until the error has been corrected in the source system. However, the impact of these errors may be zero if the correct rows have been previously manually cleaned and loaded into the DW.

Run Tutorial Scripts

Run the following tutorial script commands fron the Help -> Tutorials -> Intro Course -> Hash Duplicate Handling menu, and inspect the results.

| Script | Source data | Main points of interest |

|---|---|---|

| Step 1: Load Persons With Duplicate Data | There are duplicate rows for Immonen. | Hashing will produce duplicate person data, and the subsequent loads will fail. Neither Immonen nor Jansson will be in the DW, since the entire batch failed. The root cause is that Immonen is present in the data twice. One of the Immonen rows contains the wrong gender code (1 = male). While debugging, the code meanings can be verified from the K_Gender view.

At this point, turn the Hash Error handling parameter value on for the Person class, as instructed above. |

| Step 2: Reload Persons With Duplicate Data | There are still duplicate rows for Immonen. | Hashing will produce duplicate person data, but the duplicate rows will be moved to the error table, and the load will proceed without them.

Jansson will exist in the DW, but Immonen won’t. |

| Step 3: Manually Correct Error And Reload | Trim the error table so that only error free rows from the last batch remain. | Do this step by step:

– Drop the bad data rows from the errors table. The SQL code for this found in the script. – Run the Reload Last Failed Rows command, which will re-insert the last remaining rows from the error table into the working table, and load them into the DW. Note that Immonen is in the DW. |